Thoughts on the state of Machine Learning Engineering

Posted on Sat 08 October 2022 in Blog

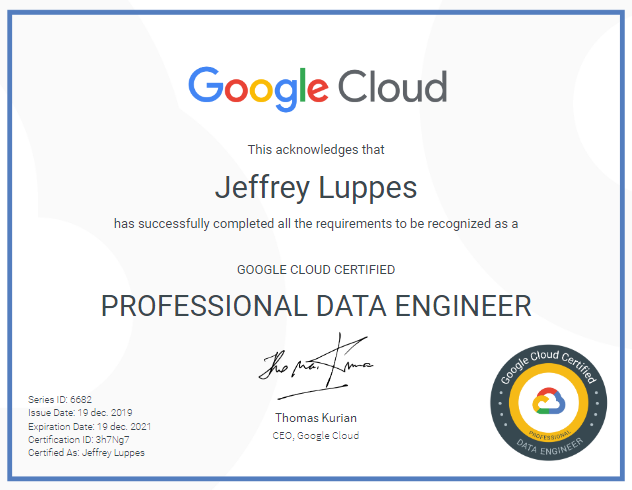

In 2020 I wrote this piece on becoming an ML Engineer. Since then, a lot has changed. The job title of ML Engineer is now relatively common - a search in October 2022 for Data Scientist and Machine Learning Engineer openings in my home country, the Netherlands, showed the …
